News

Adobe Releases Firefly Generative AI Video Tools In Beta

The model powers new features such as Generative Extend and text-to-video, with tools also coming to Photoshop and Frame.io.

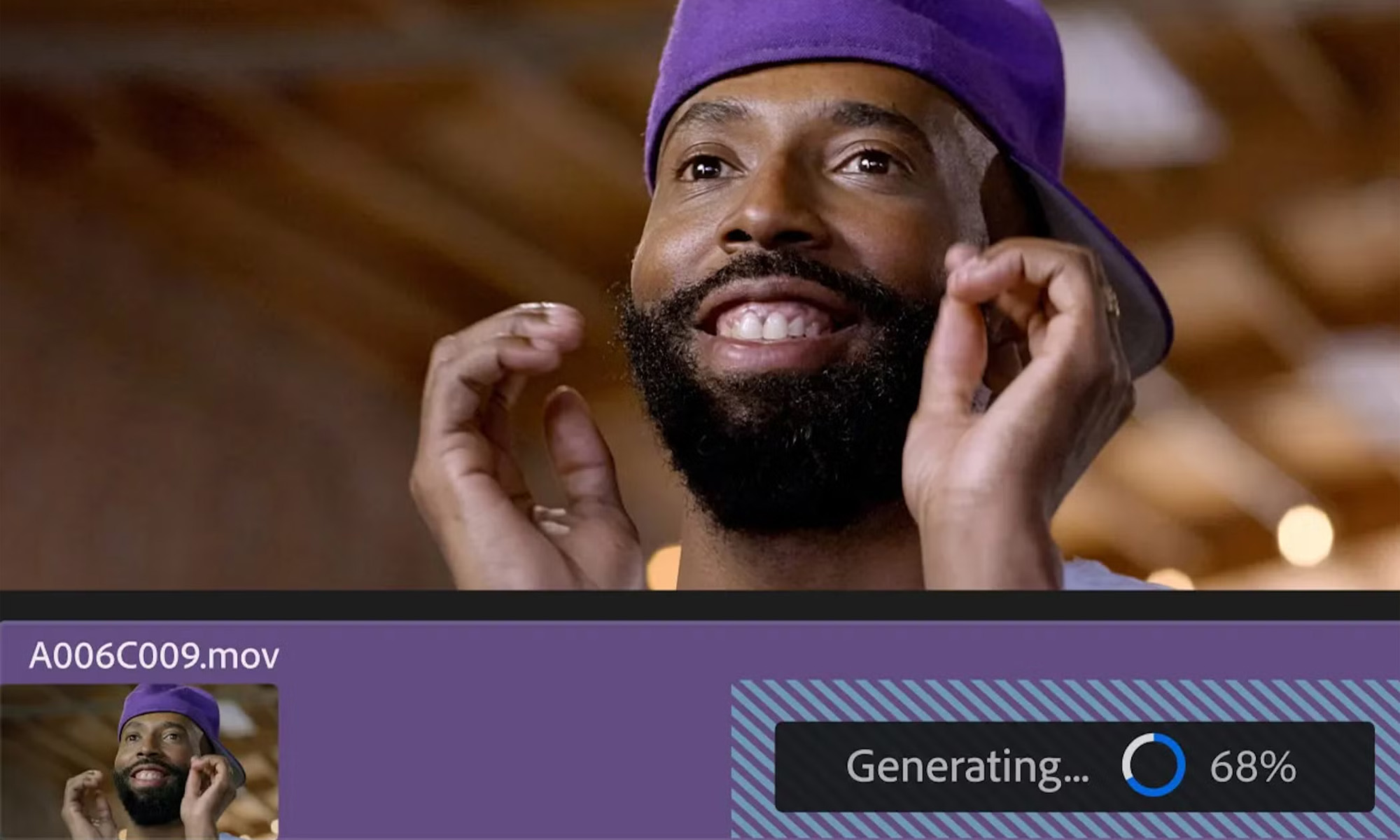

Adobe has officially entered the generative AI video arena by rolling out its Firefly Video Model, which is designed to power features across the company’s suite of apps. At Adobe MAX, the company announced that several of these new tools are now available in beta.

One of the standout features, Generative Extend, was first previewed earlier this year within Adobe Premiere Pro. The tool lets editors add AI-generated footage or audio to the start or end of a clip, which can be particularly helpful when a necessary shot is missing or a transition feels incomplete.

In September, Adobe also showcased additional tools, including a text-to-video feature similar to those from OpenAI and Meta, as well as image-to-video capabilities. Both of these tools are now available in beta within the Firefly web app — though access may require joining a waitlist.

Like other Firefly models, the Firefly Video Model and its associated features are designed with commercial safety in mind. Content Credentials watermarks are automatically applied to any AI-generated content.

On the Photoshop front, Adobe has also rolled out features that were previewed earlier this year. The latest Firefly Image Model powers Generative Fill and Generative Expand, which Adobe says can create images up to four times faster than before. There’s also the new Generate Similar tool, which allows users to create alternate versions of an object in a photo until they find the ideal match.

Additionally, Photoshop’s Remove tool has been enhanced with a feature called Distraction Removal. This new option lets users quickly erase certain elements, such as wires, cables, or even people, from images — similar to Google’s Magic Eraser.

Finally, Frame.io V4 has launched, marking the largest update to Adobe’s collaborative platform since its debut nearly a decade ago. The update brings a full redesign aimed at streamlining workflows, along with an improved video player. It also adds Camera to Cloud (C2C) support for Canon, Nikon, and Leica cameras, meaning most major manufacturers now support direct uploads of photos and videos to Frame.io.

News

Samsung Smart Glasses Teased For January, Software Reveal Imminent

According to Korean sources, the new wearable will launch alongside the Galaxy S25, with the accompanying software platform unveiled this December.

Samsung appears poised to introduce its highly anticipated smart glasses in January 2025, alongside the launch of the Galaxy S25. According to sources in Korea, the company will first reveal the accompanying software platform later this month.

According to a report from Yonhap News, Samsung’s unveiling strategy for the smart glasses echoes its approach with the Galaxy Ring earlier this year. The January showcase won’t be a full product launch; instead, it will likely be limited to teaser visuals at the Galaxy S25 event. A more detailed rollout could follow in subsequent months.

Just in: Samsung is set to unveil a prototype of its augmented reality (AR) glasses, currently in development, during the Galaxy S25 Unpacked event early next year, likely in the form of videos or images.

Additionally, prior to revealing the prototype, Samsung plans to introduce…

— Jukanlosreve (@Jukanlosreve) December 3, 2024

The Galaxy Ring, for example, debuted in January via a short presentation during Samsung’s Unpacked event. The full product unveiling came later at MWC in February, and the final release followed in July. Samsung seems to be adopting a similar phased approach with its smart glasses, which are expected to hit the market in the third quarter of 2025.

A Collaborative Software Effort

Samsung’s partnership with Google has played a key role in developing the smart glasses’ software. This collaboration was first announced in February 2023, with the device set to run on an Android-based platform. In July, the companies reiterated their plans to deliver an extended reality (XR) platform by the end of the year. The software specifics for the XR device are expected to be unveiled before the end of December.

Reports suggest that the smart glasses will resemble Ray-Ban Meta smart glasses in functionality. They won’t include a display and will weigh approximately 50 grams, emphasizing a lightweight, user-friendly design.

Feature Set And Compatibility

The glasses are rumored to integrate Google’s Gemini technology, alongside features like gesture recognition and potential payment capabilities. Samsung aims to create a seamless user experience by integrating the glasses with its broader Galaxy ecosystem, starting with the Galaxy S25, slated for release on January 22.